Over the past few years, we have seen a growth in OSINT investigations, which have played a pivotal role in raising accountability and in showing journalists, human rights investigators and government officials, among others, the potential of using open source information in their activities. However, as the community grows and more people conduct OSINT investigations, it is natural that more mistakes are made. As an OSINT Curious person, what kind of biases should you be aware of and checking yourself for as you do your investigation? Here are 5 that I would put at the top of my list.

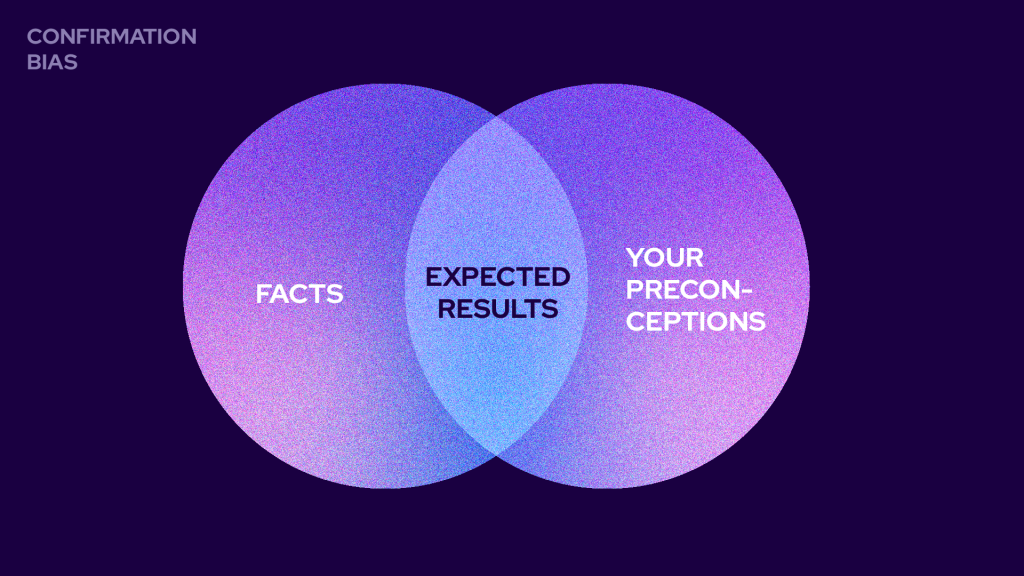

Confirmation Bias

Confirmation bias is our tendency to interpret, analyse, remember and prioritize information that supports our existing perspective on a topic. This means that when we have a pre-existing idea about something at the start of our OSINT investigation, we may tend to search for, collect and focus more on information that consolidates that starting opinion, almost as a self-fulfilling prophecy.

Examples:

This may happen, for example, if you are trying to assess whether a certain person has become radicalized to an extreme political or religious ideology. You may go through their social media profiles looking for clues of that connection while ignoring evidence that the person is in fact just emotionally unstable, and that every other week they “radicalize” about something different. So you may start with the idea that Stuart is an ISIS fan and find evidence to support that based on his activity last week, but this confirmation bias may lead you to ignore that he was a Neo-Nazi the month before, information that would completely change your assessment.

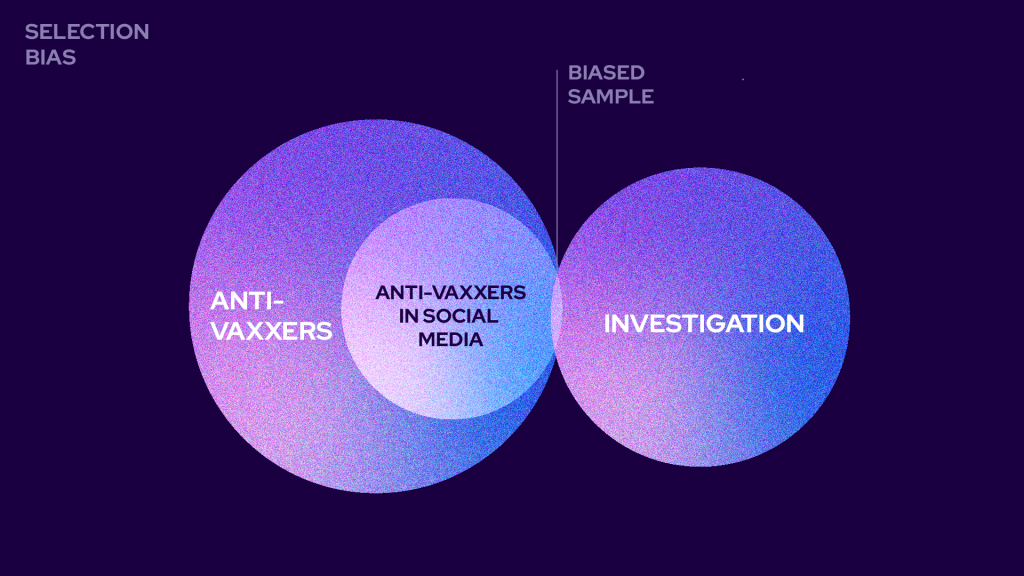

Selection Bias

Selection bias happens when the group you are collecting data from is not chosen at random and is therefore not representative of the group you want to analyse. Very often, in OSINT, this bias comes from availability (see below) or from other factors that tamper with the selection of cases.

Examples:

When doing OSINT, the limits of publicly available open source data may by themselves cause a selection effect. If you are assessing a community, for example anti-vaxxers in a certain location, presence on social media is already a filter, and some social media platforms and profiles are easier to explore than others. Sometimes the filter we use in our research also leads to a selection: if you look for employees of a certain company via LinkedIn, you exclude everyone who doesn’t have LinkedIn or doesn’t make that data publicly available in their profile.

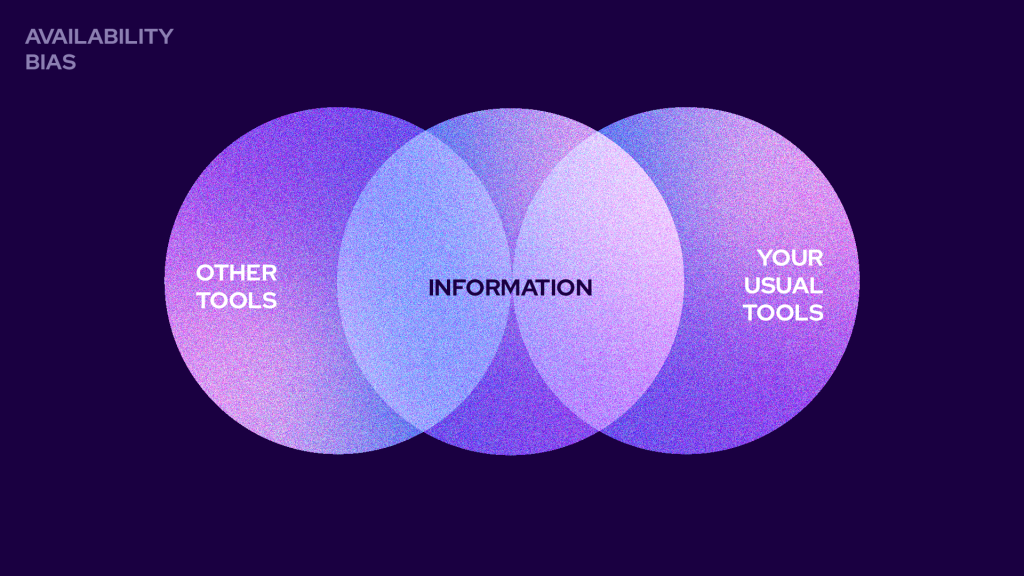

Availability Bias

Availability bias comes from our tendency to resort first to easily accessible or immediate examples when considering a topic. This means we tend to ignore the rest of reality and focus mostly on what we recall or have easy access to, what is more recent or frequent, or what is more extreme and emotional.

Examples:

In OSINT it is difficult to keep up with all the tools and techniques, so it is normal that we resort to, and tend to repeat, what works, even though it may compromise the variety of results. We may have worked recently with a certain technique or social media platform and overuse it, or we may overestimate the usefulness or importance of a tool or data set that our community has been focusing on recently.

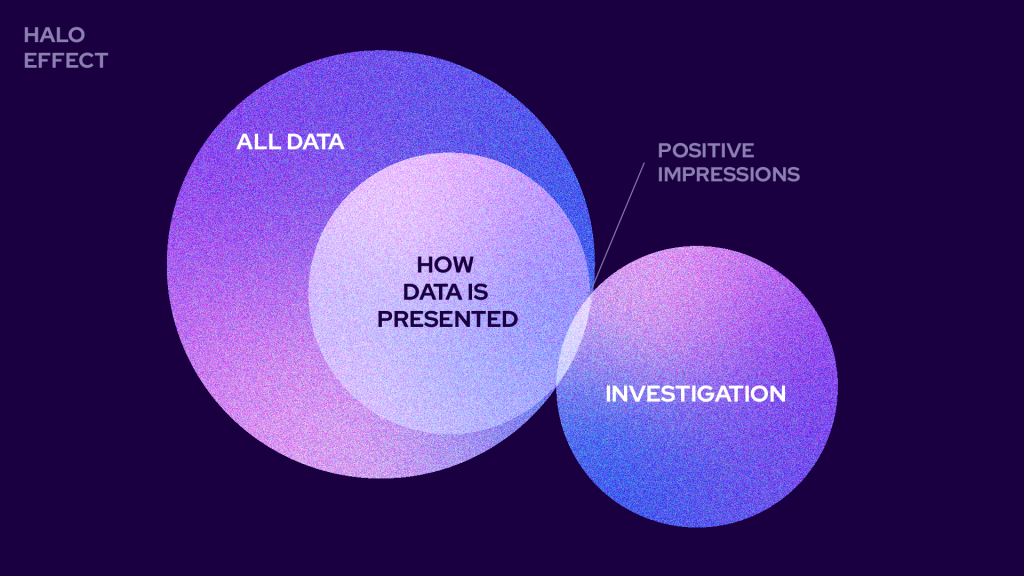

Halo Effect

The Halo Effect is a bias that makes us build a positive impression of something or someone based solely on a single trait, transferring that good evaluation to other characteristics. It is one of the explanations for why people perceived as better looking get lighter sentences from jurors.

Examples:

In OSINT, you can probably remember several situations where the Halo Effect applies, especially in SOCMINT, where we are often already analysing a “curated” view of a person’s life. In Social Engineering operations, this ‘Halo Effect’ is also very often exploited to lower other users’ guard, by choosing pictures for fake profiles that convey positive traits.

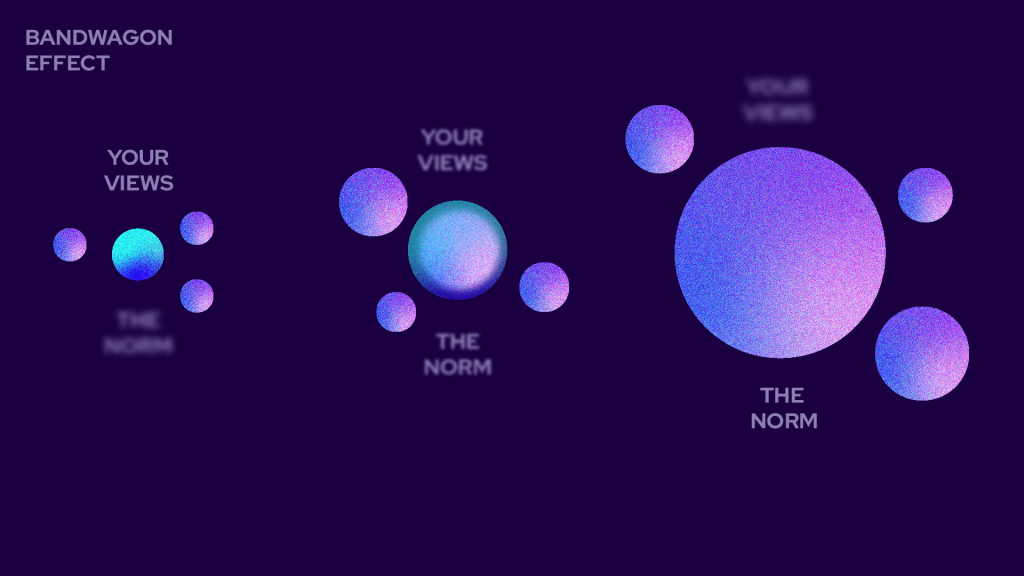

Bandwagon Effect

As the name implies, the bandwagon effect is when we decide primarily based on observing what others are doing and repeating that behaviour, even though we may hold a different view.

Examples:

In OSINT, if you are investigating a topic that your colleagues have already made a decision about, it may be difficult to interpret the results differently, even if you have elements that point in a different direction. Once again, if all your colleagues believe Stuart is a dangerous ISIS radical, it may be difficult for you to argue that it may be a honeypot profile run by a Law Enforcement agency, even if you have compelling evidence of that.

How can I fight these biases?

We can never completely eliminate our biases but can learn how to manage them by promoting critical thinking and implementing work practices like:

- Keep decision-making logs at the different stages of your investigation, so that it becomes replicable and accessible to others.

- Peer review of your investigations by others.

- Work as a team on an investigation, or separately on the same investigation, then compare the different results, review the logs, compare the decisions made and correct work processes.

- Use different tools and methods to find, collect and analyse data. Experiment and keep up to date with new tools and techniques, so you don’t repeat the same processes over and over again.

- Have a look at the data and the conclusions you are drawing. Do I have enough data from different sources, or is this the same information just scattered across different online places? Have I weighed the elements that support my conclusion against those that contradict it?

- When sharing your investigation, make it as transparent and open as possible, while taking into account privacy and the risk of compromising methods and sources, so that others can review it properly.

- Do not exclude elements that contradict your main conclusion.

- Write using specific terms that make your doubts and degree of certainty clear, as well as the number and quality of the elements that you collected to support your conclusion.

- Be open to criticism and review your own work with these and other biases in mind.
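If you like to keep your notes in code, a decision-making log can be as simple as the sketch below. This is only one possible way to do it, not a standard: the `LogEntry` fields, the `CONFIDENCE_TERMS` vocabulary and the checks in `review_flags` are all hypothetical choices made for illustration, and you should adapt them to your own workflow.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical vocabulary for stating the degree of certainty explicitly.
CONFIDENCE_TERMS = ("low", "moderate", "high")


@dataclass
class LogEntry:
    """One decision taken at one stage of an investigation."""
    step: str
    decision: str
    confidence: str
    supporting: list[str] = field(default_factory=list)
    contradicting: list[str] = field(default_factory=list)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def review_flags(entry: LogEntry) -> list[str]:
    """Return simple warnings a peer reviewer might want to look at."""
    flags = []
    if entry.confidence not in CONFIDENCE_TERMS:
        flags.append("confidence is not stated in agreed terms")
    if not entry.contradicting:
        # Nothing against the conclusion was written down at all.
        flags.append("no contradicting elements recorded")
    if len(set(entry.supporting)) < 2:
        # The same source repeated in several places still counts once.
        flags.append("fewer than two distinct supporting sources")
    return flags


# Example: the assessment of Stuart based only on last week's activity.
entry = LogEntry(
    step="Social media profile review",
    decision="Stuart is an ISIS supporter",
    confidence="high",
    supporting=["posts from last week"],
)

for flag in review_flags(entry):
    print(flag)
```

An empty list of flags does not mean the assessment is free of bias. The point is only to make each decision, its evidence and its stated certainty visible, so that you and your peers have something concrete to review.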